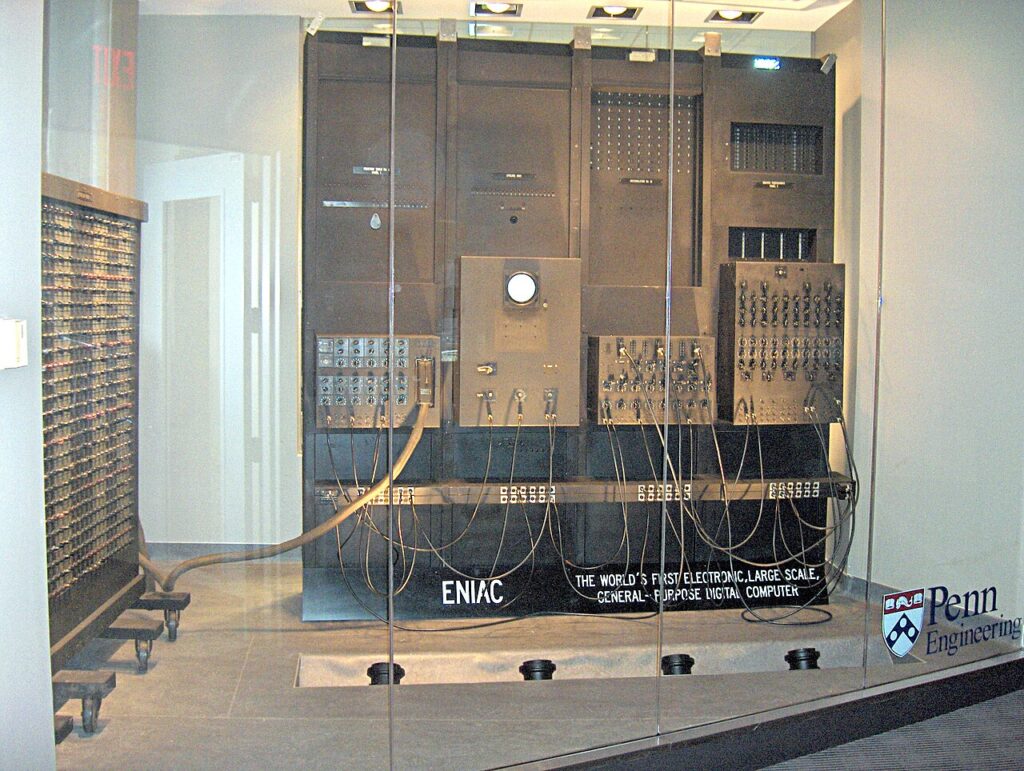

In the previous part of this series, we explored how Turing, Berners-Lee, and advances in computing and neuroscience created a digital ecosystem that connects minds and accelerates knowledge like never before. In this final part, we reflect on science’s triumphant arc – from centuries dominated by superstition and untestable beliefs to an era of evidence-based mastery over nature’s deepest challenges. Where once plagues were divine wrath and comets omens of doom, today vaccines eradicate diseases and telescopes reveal cosmic truths. As we enter 2026, breakthroughs in quantum computing, biotechnology, fusion energy, space exploration, and artificial intelligence exemplify this shift: replacing fear with understanding, prayer with precision, and limitation with limitless potential. These advances promise to conquer illness, secure sustainable power, unveil the universe, and amplify human intellect – yet they remind us that science’s true power lies in its rigorous, self-correcting pursuit of evidence.

From Superstition’s Shadow to Evidence’s Light

For millennia, humanity attributed misfortune to supernatural forces: earthquakes as godly anger, illnesses as curses, celestial events as portents. Prayers and rituals offered solace but no solutions, leaving life precarious and short. Science overturned this worldview through observation, experimentation, and reproducibility – transforming mystery into mechanism.

Today, this contrast is stark. Where medieval societies blamed miasmas or sin for epidemics, modern microbiology and vaccines, rooted in Pasteur’s germ theory, have eradicated smallpox and dramatically curbed measles. Ancient astrologers read fates in the stars; now, telescopes like the James Webb Space Telescope peer back to the cosmic dawn, revealing early galaxies, potential biosignatures on exoplanets, and even a new moon around Uranus in 2025 observations.

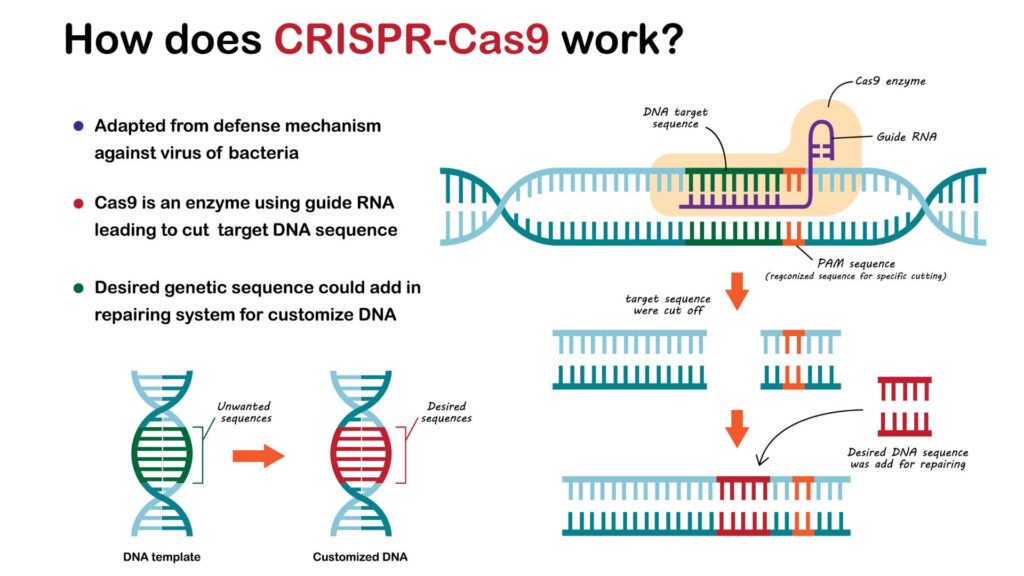

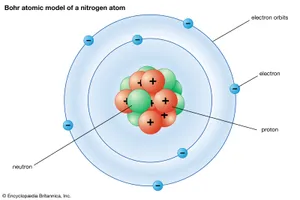

In health, superstition once prescribed bloodletting or charms; evidence now delivers CRISPR therapies. 2025 saw one-time infusions safely slashing cholesterol by over 50% in high-risk patients via gene editing targeting ANGPTL3, while trials advanced cures for sickle cell, amyloidosis, and rare disorders – building on Watson, Crick, and Franklin’s DNA helix. These are not miracles, but measurable outcomes of methodical inquiry.

A New Era of Discovery

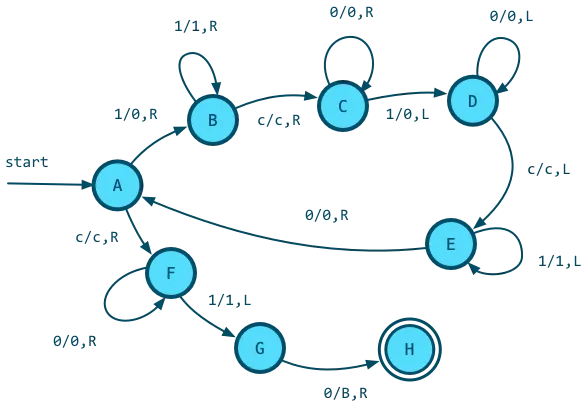

Quantum computing exemplifies progress over prophecy. IBM’s 2025 Nighthawk processor and advancements toward fault-tolerant systems by 2026–2029 promise simulations of molecules and materials, for drug design and beyond, that are intractable on classical computers, echoing Curie’s atomic revelations but harnessing them for practical quantum advantage.

Nuclear fusion, long dismissed as eternally distant, advanced decisively: China’s EAST tokamak accessed a “density-free regime” in late 2025, sustaining high-density plasmas that open a pathway toward ignition, while global efforts like WEST’s record plasma durations and high-temperature superconductors push toward commercial viability in the 2030s – offering clean, abundant energy without fossil fuels.

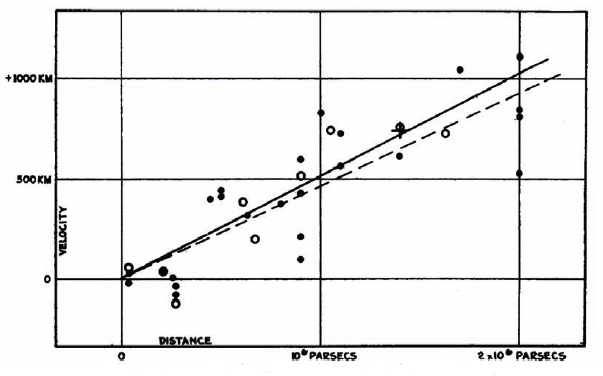

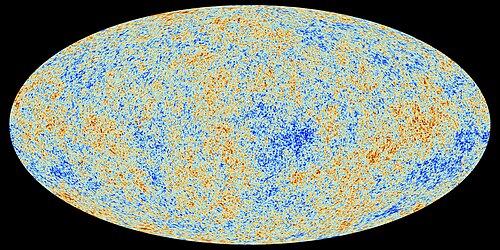

Space discoveries add to the picture: Webb’s 2025 views captured supernovae from the universe’s infancy and carbon-rich exoplanet atmospheres hinting at habitability, extending Hubble and Gamow’s Big Bang insights.

(Credit: livescience.com)

Artificial intelligence accelerates it all. 2025 models excelled on reasoning benchmarks, aiding fusion control, protein design, and hypothesis generation – propelling us toward systems that augment discovery: not oracles, but tools grounded in data.

From Copernicus repositioning Earth to these revolutions, science has liberated humanity from superstition’s grip, delivering longer lives, interconnected knowledge, and tools to address existential threats. The future gleams with promise: eradicated diseases, limitless clean energy, interstellar exploration, and intelligence beyond biological limits. Yet ethical stewardship – ensuring equitable access, safe AI, and responsible gene editing – demands the same evidence-based rigor.

This series has chronicled science not as arcane facts, but as humanity’s greatest force: dismantling myths, illuminating truths, and empowering progress. Its method – question, test, refine – holds no bounds when guided by curiosity and accountability. In an age where evidence triumphs over enchantment, science lights the way ahead.

Thank you for joining this journey through Science as a Force for Progress.